Nvidia’s ACE technology brings AI avatars into real-time voice and facial interactions with players.

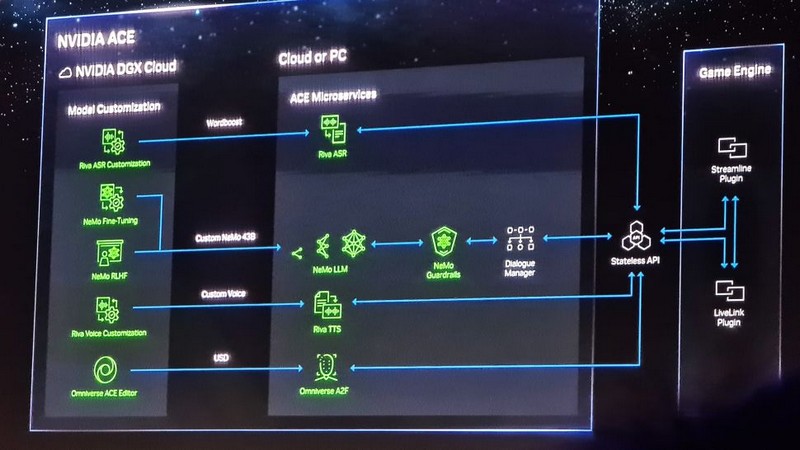

Nvidia has just announced ACE for Games, a custom version of its Omniverse Avatar Cloud Engine (ACE) designed to give non-player characters (NPCs) voices and facial animation in real time. Nvidia CEO Jensen Huang explained that ACE for Games combines text-to-speech, natural language understanding, and automatic facial animation.

In practice, an ACE-powered NPC listens to what the player says. When the player asks the NPC a question, the AI generates a reply that fits the NPC's defined personality and background, then animates the NPC's face to match as the line is spoken aloud. Huang also showcased the technology in a real-time demo built in Unreal Engine 5 with AI startup Convai. Set in a cyberpunk world, the demo follows the player into a ramen shop for a conversation with the shop owner. Although the owner had no scripted dialogue, the NPC answered the player's questions in real time and even handed the player a side quest.

The demo clearly made an impression on many viewers and opened up a new avenue for how games could use this technology in the future. As Huang put it: "AI will be a very important part of the future of video games."

So what does it take to run the ACE for Games demo? Two essential ingredients are ray tracing and DLSS. The technology may also demand more than an average GeForce GPU, or rely on a cloud-based component. More details will likely emerge once games actually start building with it. So far, Nvidia has confirmed two games that use Audio2Face, the facial animation technology within ACE for Games: STALKER 2: Heart of Chernobyl from GSC Game World and Fort Solis from indie studio Fallen Leaf. Hopefully Nvidia will soon show the public examples of the full ACE for Games suite in action.